Post Conference Note

IREG Forum in Warsaw – a post conference note

(Warsaw, 18 May 2013) Over 130 experts on university rankings and representatives of the academic community from 32 countries met in Warsaw on 16-17 May 2013 at the IREG Forum on University Rankings – Methodologies under scrutiny. The conference was organized by the IREG Observatory on Academic Ranking and Excellence and the Perspektywy Education Foundation in cooperation with the Polish Academy of Sciences.

Opening the Forum, Jan Sadlak, President of IREG Observatory, pointed to the usefulness of university rankings as an information, assessment and transparency tool. In the keynote speech, Michal Kleiber, President of the Polish Academy of Sciences, stressed the importance of good data and quality information both for rankings and for higher education. The Forum’s sessions were chaired by Andrea Bonaccorsi (Italy), Waldemar Siwinski (Poland), Nian Cai Liu (China), Klaus Huefner (Germany) and Alex Usher (Canada).

The underlying philosophy of the Forum in Warsaw was that rankings can only be as good as the methodologies behind them. Consequently, both presentations and discussions focused on various aspects of the methodologies used in making rankings. The timing of this discussion was well chosen, given a number of important new initiatives emerging in the ranking field, such as U-Multirank in Europe (presented by Gero Federkeil) and Folha’s University Ranking in Brazil (presented by Sabine Righetti).

Another distinctive feature of the IREG Forum was a special session with panelists representing institutions from several countries with different higher education systems. The panelists, Sergey Tunik (Saint Petersburg University), Stanislaw Kistryn (Jagiellonian University), Lars Uhlin (Lund University), Mukash Burkitbayev (Al-Farabi University), Mircea Dumitru (University of Bucharest) and Ladislav Nagy (Babes-Bolyai University), commented on rankings as seen from the university’s perspective. The organizers introduced this session to give a voice to those who are the subject of rankings but rarely have a chance to say what they think about them and how they assess their positive and negative sides.

A group representing Polish universities played an active role in the Forum program, including Marcin Palys, Rector of the University of Warsaw, and Wladyslaw Wieczorek, Vice Rector of the Warsaw University of Technology. The two Warsaw universities also hosted the Conference Dinner. The Frederic Chopin Institute was the Forum’s partner. The Elsevier BV publishing house was the sponsor of the IREG Forum.

Over the past three years IREG Observatory has worked on the idea of a ranking audit, and the first audit results were announced at the Forum. Two rankings, one national and one international, the Perspektywy University Ranking (Poland) and the QS World University Rankings, were granted the right to use the quality certificate “IREG Approved”. Jan Sadlak, IREG President, and Klaus Huefner, IREG Audit Coordinator, handed the certificates to Ben Sowter of the QS Intelligence Unit and Waldemar Siwinski, President of the Perspektywy Education Foundation.

IREG Observatory on Academic Ranking and Excellence is an association of ranking organizations, universities and other organizations interested in improving the quality of rankings and of higher education in general. IREG Observatory was established in 2009. Its membership has grown from nine to 34 since then, and new applications are pending.

The IREG Observatory and QS Intelligence Unit invite participants to the IREG-7 Conference, Employability and Academic Rankings – Reflections and Impacts, to be held in London, 14-15 May 2014.

Additional information: k.bilanow@ireg-observatory.org

Introduction and Invitation

IREG Forum on Ranking Methodologies

The growing importance of national, regional and global university rankings attracts the attention of an ever larger group of stakeholders who show interest in how these rankings are made. They also realize that what each ranking tells or does not tell depends on the criteria used. Methodology is like a mirror: we can see in it only as much as the methodology allows.

What is methodology? According to the classic definition, a method is a planned way of doing something. A methodology, on the other hand, is a set of methods used for study or action in a particular subject, as in science or education.

Ranking methodology represents a broad spectrum of methods; it covers the philosophy behind a ranking (what makes a great university?), the methods of collecting and processing data, and the way results are published. The desired and recommended set of principles that should be applied while preparing academic rankings was presented in 2006 as the IREG Berlin Principles on Ranking of Higher Education Institutions. According to the Berlin Principles, every proper methodology should cover the following key areas: purpose and goal of a ranking, design and weight of indicators, collection and processing of data, and presentation of the results.

The areas specified by the Berlin Principles are still valid today; however, the growing availability of data related to research and higher education contributes to fast changes in data analysis and ranking methodologies. It is high time for a thorough discussion on the evolution of ranking methodologies, not only for the sake of ranking analysts but also for the benefit of the stakeholders who want to understand the advantages and the shortcomings of various rankings.

The IREG Forum on Ranking Methodologies, to be held in Warsaw, Poland, on 16-17 May 2013, will concentrate on discussion and analysis of various ranking methodologies. It will examine the methodologies of the main international and national rankings. Particular attention will be paid to ways of overcoming the limitations some methodologies are currently facing. One of the sessions will be devoted to the theoretical aspects of econometrics, bibliometrics and statistics used in ranking. There will also be time for presentation of the methodologies employed in the new academic rankings under preparation in various regions of the world.

Authors of every ranking face a number of critical questions: How to construct the methodology? How to implement the methodology? How to collect and use the data? When and how to present the results? How to correct the errors? What lessons are learned from errors? The Forum in Warsaw will seek answers to these and other ranking-related questions.

The IREG Forum in Warsaw will be hosted and organized by the Perspektywy Education Foundation and the IREG Observatory on Academic Ranking and Excellence, as well as other partners.

Programme

Thursday, 16 May 2013

8.30 – 9.00 Registration

9.00 – 10.00 Opening Session

Welcome and opening remarks from:

Waldemar Siwiński, President, Perspektywy Education Foundation; Vice-President of IREG Observatory

Jan Sadlak, President of IREG Observatory

Keynote speech:

Michał Kleiber, President of the Polish Academy of Sciences (PAN):

Quest for good data and quality information: Imperative for good policy and functioning of higher education

10.00 – 11.30 First Session: Methodological Challenges in Conducting University Rankings and Institutional Classifications

Chair: Andrea Bonaccorsi, University of Pisa, Member of the Board of the National Agency for the Evaluation of Universities and Research Institutes (ANVUR), Italy

Speakers:

Wu Yan, Center for World-Class Universities, Graduate School of Education, Shanghai Jiao Tong University, China:

Global Research University Profiles: Case study of interactive ranking platform of the Academic Ranking of World-class Universities (ARWU)

Bob Morse, Director of Data Research, US News & World Report, USA:

How to secure reliable data

Ben Sowter, Head of Division, QS Intelligence Unit, Great Britain:

A refined approach to global university rankings by discipline

Isidro F. Aguillo, Head, Cybermetrics Lab, the Spanish National Research Council (CSIC), Spain:

Combining Webometric and bibliometric information: Web Ranking methodology under scrutiny

Discussion

11.30 – 12.00 Coffee Break

12.00 – 13.30 Second Session: New Rankings and New Methodological Approaches

Chair: Waldemar Siwinski, President, Perspektywy Education Foundation, Vice-President of IREG Observatory, Poland

Speakers:

Gero Federkeil, Vice-President of IREG Observatory; Manager in charge of Rankings, Center for Higher Education, Germany:

U-Multirank: From testing to practicing of international multi-dimensional methodology

Lubov Zavarikina, the National Training Foundation, Russia:

Key aspects and methodology for university ranking in Russia (as developed by the National Training Foundation)

Philippe Vidal, Professor, and Ghislaine Filliatreau, Director, OST – Observatoire des Sciences et des Techniques, France:

Positional impact of reputational surveys in global university rankings

Sabine Righetti, Rogerio Meneghini, Helio Schwartsman, Folha de S.Paulo, Brazil:

Brazil’s first university ranking: A closer look at RUF (Folha’s University Ranking), its methodology and its results

Discussion

13.30 – 15.00 Lunch

15.00 – 16.30 Third Session: How University Ranking Relates to Other Approaches for Quality Assurance and Sources of Information

Chair: Nian Cai Liu, Vice-President, IREG Observatory; Director, World-Class Universities Study Centre, Graduate School of Education, Shanghai Jiao Tong University, China

Speakers:

Benoit Millot, Lead Education Economist, The World Bank, Washington:

Ranking universities versus benchmarking tertiary education systems: Why different methodologies lead to similar results?

Fatih Omruuzun, Graduate School of Informatics, Middle East Technical University, Turkey:

Usability and interface design evaluation of major gateways to science literature: Scopus and Web of Knowledge

Serban Agachi, Professor, Babes-Bolyai University; President of the Association of Carpathian Region Universities (ACRU), Romania:

Procedures and experience in verification of data on higher education institutions in Romania

Michaela Saisana, Scientific Officer, Joint Research Centre (JRC) of the European Commission, Italy:

Quality criteria for data aggregation used in academic rankings

Discussion

16.30 – 16.45 Coffee Break

16.45 – 18.15 Fourth Session: New Look at Policies, Methodologies and Practices of University Rankings

Chair: Klaus Hüfner, IREG Audit Coordinator, Germany

Speakers:

Alex Usher, President, Higher Education Strategy Associates, Canada:

New approaches to ranking research output: The experience of HESA’s Measuring Academic Research in Canada project

Jocelyne Gacel Avila, Researcher and General Director for Internationalization at the University of Guadalajara, Mexico:

The global rankings debate in Latin American higher education: A critical approach

Maja Jokic, Kresimir Zauder and Marina Matesić, Institute for Social Research, Croatia:

The importance of national ranking of universities and research institutions: Experience of “small” countries

Niko Hyka, Avni Meshi, Edmond Mino, Public Accreditation Agency for Higher Education; and Dafina Xhako, Department of Engineering Sciences, University “Aleksander Moisiu”, Durres, Albania:

Improving the ranking model of the Albanian higher education institutions

Discussion

19.30 – 21.30 Conference Gala Dinner

Venue: Centralna Biblioteka Rolnicza (Agricultural Library), Krakowskie Przedmiescie 66

Friday, 17 May 2013

9.30 – 11.20 Fifth Session: Ranking Methodologies: Universities’ Experience and Expectations

During this session, panelists representing leading universities will reflect and comment on a set of pertinent issues related to university rankings, such as:

– which university rankings are relevant for your university, and why;

– what is your experience with ranking organizations [before and after a ranking is produced];

– what suggestions do you have for the ranking organizations to improve the methodology and presentation of rankings;

– do university rankings influence the governance and administration of your university, and if so, in which way.

Chair: Wojciech Bieńkowski, Professor, Lazarski University, Poland

Speakers – representatives of universities (Members of IREG Observatory):

Sergey Tunik – Saint Petersburg State University, Russia

Stanisław Kistryn – Jagiellonian University, Poland

Lars Uhlin – Lund University, Sweden

Mukhambetkali (Mukash) Burkitbayev – Al-Farabi Kazakh National University, Kazakhstan

Anne Marie Cassidy – University of Navarra, Spain

11.20 – 11.50 Coffee Break

11.50 – 13.00 Special Session and IREG Audit

Chair: Alex Usher, President, Higher Education Strategy Associates, Canada

Lisa Colledge, Director Snowball Metrics Initiative, Elsevier, the Netherlands:

Snowball Metrics: A new method of benchmarking of research-related activities

Klaus Hüfner, Coordinator of IREG Ranking Audit, Germany, and Carla Cauwenberghe, the Dutch Inspectorate for Education, the Netherlands, Auditor of IREG Ranking Audit:

IREG Ranking Audit: First observations and lessons learnt

13.00 – 13.30 Closing Session and Final Discussion

Chair: Jan Sadlak, President of IREG Observatory

Also a presentation by the host organization, QS Quacquarelli Symonds, of the IREG-7 Conference: Employability and Academic Rankings – Reflections and Impacts, 14-16 May 2014, London, United Kingdom

13.30 – 14.30 Farewell lunch

14.30 – 16.00 IREG Observatory General Assembly (closed session)

Optional post-conference events and sightseeing

Organizers

Program Committee

- Jan Sadlak, President, IREG Observatory on Academic Ranking and Excellence, Co-Chair

- Michal Kleiber, President, Polish Academy of Sciences, Co-Chair

- Liu Nian Cai, Vice-President, IREG Observatory; Director, Center for World-Class Universities, Shanghai Jiao Tong University, P.R. China

- Waldemar Siwiński, Vice-President, IREG Observatory; Perspektywy Education Foundation, Poland

- Gero Federkeil, Vice-President, IREG Observatory; CHE Center for Higher Education, Germany

- Marek Rocki, President, Polish Accreditation Committee

- Bob Morse, US News & World Report

Organizing Committee

- Kazimierz Bilanow, Managing Director, IREG Observatory on Academic Ranking and Excellence

- Wojciech Marchwica, Perspektywy Education Foundation

Hotel & Optional

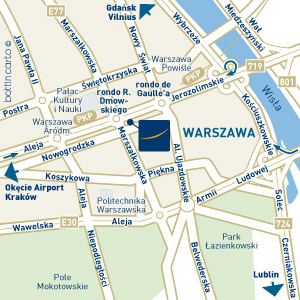

Novotel Centrum Warszawa

ul Marszalkowska 94/98

00-510 WARSAW

POLAND

Tel (+48)22/5960000

Fax (+48)22/5960647

E-mail: H3383@accor.com

Special rates are available for IREG Forum participants; use the form below.

Optional Tourist Attractions

If, in addition to participating in the IREG Forum, you wish to explore Warsaw and other Polish cities, below you will find suggestions for a variety of guided tours, such as the Warsaw City Tour, Fryderyk Chopin Tour, Jewish Heritage Tour and Krakow Excursion. We are sure they will be an interesting addition to your stay in Poland.
